I heard an interesting statistical example in class the other day and wanted to do a deep dive into it. Bear with me and explore the wonders of ground beef through the student-t distribution.

Experimental Background

The setup goes as follows.

Say you walk into a supermarket and check out the ground beef. It says 85% lean, in a package that weighs about 1.5 lbs. But how sure are you of that 85%? You’re paying more than you would for the 80%, and less than the 90%. So you want to get your money’s worth.

What can you tell about the beef, and what can you infer based on the measurements you’re taking?

Sample characteristics

In the sample case, the beef has a percent lean (85%) and a weight (1.5 lb). The percent lean is a simplification: $0.85 \times 1.5\text{ lb} = 1.275\text{ lb}$ of meat and $0.225\text{ lb}$ of fat. The percentage is the most important value here - but how can you tell?

Would you be able to find the true percent lean from the meat's density? This result lists 229 grams per metric cup for every ground beef from 70% to 95% lean, so density is a useless value here.

Maybe cook times? Something with more fat would be quicker to smoke.

In searching for cook times, I found this autogenerated site which recommends cooking ground beef for 20 mins in a plastic bag inside a 350$^\circ$ oven. Not a great idea.

I found this paywalled article from 1971 on cooking the meat mixture in an alkaline solution, which allows for separation of fat and meat. That could be a possible solution but probably renders the meat you just bought inedible.

A friend suggested varying cook times - take the same weight of ground beef, use a precise oven, and cook until an instant-read thermometer measures 155 degrees internally. Of course ovens introduce their own variation at this stage. I’d imagine there is a small correlation here, but the cook times may not differ enough between meat types to give meaningful results. You’d have to do some sort of graduated error calculation to back out the sample's lean percentage, even assuming the time relates linearly to the amount of fat in your food.

Texas A&M has this table to show varying levels of protein, fat, nutrients, vitamins, and minerals for given 3 oz cuts of meat.

| | 73% lean | 80% lean | 85% lean |
|---|---|---|---|
| Calories | 248.00 | 235.00 | 213.00 |
| Protein (g) | 22.84 | 24.38 | 24.85 |
| Total Fat (g) | 16.83 | 14.52 | 11.81 |
| Iron (mg) | 2.27 | 2.18 | 2.37 |
| Zinc (mg) | 4.99 | 5.35 | 5.51 |

The best resource I found for beef measuring was this video by Penn State University. They show a method used by meat grinders to calculate percentages of grinds for ground meat, then show an impressive experimental setup to separate the fat from the cooked meat. It uses an acidic solution - maybe the 1971 paper wasn’t so off.

The method for grinding is called the Pearson Square which is pretty common in dairy and wine science. Butchers take a higher fat meat and combine it with a lower fat meat to get the target percentage. This would assume that the cuts themselves have an intrinsic fat content, which is a little bit of a letdown.
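
For a feel of how the Pearson Square works, here is a minimal sketch. The function name `pearson_square` and the 30% / 10% fat trim values are made up for illustration; they are not from the PSU video. The idea is that each ingredient's share is proportional to the distance between the target and the *other* ingredient's fat percentage.

```python
def pearson_square(high_fat_pct, low_fat_pct, target_fat_pct):
    """Return (high_share, low_share): the fraction of each ingredient by weight."""
    if high_fat_pct <= low_fat_pct:
        raise ValueError("high_fat_pct must be greater than low_fat_pct")
    if not (low_fat_pct <= target_fat_pct <= high_fat_pct):
        raise ValueError("target must lie between the two ingredient fat percentages")

    # Parts of each ingredient = distance from the target to the other ingredient
    high_parts = target_fat_pct - low_fat_pct
    low_parts = high_fat_pct - target_fat_pct
    total_parts = high_parts + low_parts
    return high_parts / total_parts, low_parts / total_parts


# Hypothetical trims: 30% fat and 10% fat, blended to 15% fat (85% lean)
high_share, low_share = pearson_square(30.0, 10.0, 15.0)
print(f"{high_share:.0%} fatty trim, {low_share:.0%} lean trim")
```

With these made-up inputs the blend works out to one part fatty trim for every three parts lean trim.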

Image from the PSU Video showing separated fat. Looks like my attempt at taco meat or bolognese.

All this to say, you really can’t measure the sample fat content of a given ground beef product. But what happens when you take many samples?

Sampling characteristics

This has less to do with the pieces of ground beef themselves but more with the characteristics of taking multiple samples of meat. Let me sum up the findings so far:

- A sample of ground beef has a lean percentage associated with it

- There is no good option to find the true mean lean percentage

- The population of ground beef samples is large. The number of samples you can take is not, because time is money and it’s not worth sacrificing your time or your money to buy 30+ packages of ground beef and then boil them in acid to gain a meaningful but error-prone data point.

Besides, you should be grinding your own meat. (Right after the video ends, he says to grind it yourself).

An option to the sample mean

Continuing with the math…

Sampling Math

(Much of this is derived from the Wikipedia page on the student-t distribution)

First, since the population is large, we assume it is normally distributed, with $X \sim \mathcal{N}(\mu,\,\sigma^{2})$

Taking $n$ samples $X_1, \dots, X_n$ from it, the sample mean is:

$\bar{X} = \frac{1}{n} \sum_{i=1}^{n} X_i $

Finding the variance is just as easy, but uses the [Bessel-corrected](https://en.wikipedia.org/wiki/Bessel%27s_correction) sample variance (just go with me on this):

$S^2 = \frac{1}{n-1} \sum_{i=1}^{n} (X_i - \bar{X})^2 $
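
As a quick sketch of the two formulas above, here they are in plain Python. The five lean fractions are made-up numbers, not real measurements. The check against the standard library's `statistics.variance` works because that function also divides by $n - 1$.

```python
import math
import statistics

# Hypothetical lean fractions for five packages (made-up numbers, not measurements)
samples = [0.84, 0.86, 0.83, 0.87, 0.85]

n = len(samples)

# Sample mean: (1/n) * sum of X_i
x_bar = sum(samples) / n

# Bessel-corrected sample variance: divide by n - 1 instead of n
s_squared = sum((x - x_bar) ** 2 for x in samples) / (n - 1)

# The standard library agrees: statistics.variance also divides by n - 1
assert math.isclose(x_bar, statistics.mean(samples))
assert math.isclose(s_squared, statistics.variance(samples))

print(f"mean = {x_bar:.4f}, variance = {s_squared:.6f}, std dev = {math.sqrt(s_squared):.4f}")
```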

Taking the difference between the sample mean and the population mean, divided by the standard error $\sigma / \sqrt{n}$, gives a quantity that is still random, with mean 0 and variance 1:

$\frac{\bar{X} - \mu}{\sigma / \sqrt{n}} $

Replacing $\sigma$ with $S$, since $S$ describes the sample instead of the population:

$\frac{\bar{X} - \mu}{S / \sqrt{n}} $

Take a look at that expression. $\mu$ is our only unknown, since we can't measure the true mean lean percentage in ground beef. The numerator and denominator are also independent - variation in the sample mean does not affect the sample variance. To see this, take the covariance between the sample mean and each deviation from it:

$\mathrm{Cov}(\bar{X}, X_i - \bar{X}) = \mathrm{Cov}(\bar{X}, X_i) - \mathrm{Var}(\bar{X}) = \frac{\sigma^2}{n} - \frac{\sigma^2}{n}$

$\mathrm{Cov}(\bar{X}, X_i - \bar{X}) = 0$

Zero covariance is enough here because $\bar{X}$ and $X_i - \bar{X}$ are both linear combinations of independent, identically distributed (iid) normal random variables, so they are jointly normal. For jointly normal variables, zero covariance means independence, and since $S^2$ is built only from the deviations $X_i - \bar{X}$, it is independent of $\bar{X}$.
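
The zero-covariance result can be sanity-checked with a small simulation. This is only a sketch: the function name, the sample size of 5, and the population values $\mu = 0.85$, $\sigma = 0.05$ are all assumptions picked for illustration. It draws many normal samples and estimates the covariance between the sample mean and the first deviation $X_1 - \bar{X}$.

```python
import random


def simulate_mean_deviation_cov(n=5, trials=20000, mu=0.85, sigma=0.05, seed=0):
    """Estimate Cov(X_bar, X_1 - X_bar) over many simulated normal samples."""
    rng = random.Random(seed)
    means, deviations = [], []
    for _ in range(trials):
        xs = [rng.gauss(mu, sigma) for _ in range(n)]
        x_bar = sum(xs) / n
        means.append(x_bar)
        deviations.append(xs[0] - x_bar)  # deviation of the first draw from the mean

    mean_of_means = sum(means) / trials
    mean_of_devs = sum(deviations) / trials
    # Sample covariance between the two simulated series
    return sum(
        (m - mean_of_means) * (d - mean_of_devs) for m, d in zip(means, deviations)
    ) / (trials - 1)


cov = simulate_mean_deviation_cov()
print(f"estimated covariance: {cov:.2e}")
```

The estimate will not be exactly zero, but it should be tiny compared with $\sigma^2 / n = 0.0005$, the variance of the sample mean under these assumed values.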

What’s the big idea?

The only “unknown”, i.e. the variable we can’t determine, is $\mu$, the true population mean lean percentage. We cannot possibly determine, out of all 85% ground meat packages, what the true percentage is.

But we can try confidence intervals.

The Student-T Distribution

The value $n$ gives us our insight into how the sample mean may change. If we take more values, we may find greater evidence towards the true population mean percentage. The student-t distribution was designed exactly for this purpose: the samples are small and the population mean and variance are unknown.

Many more pages of theory later, we arrive at the Student-T table, which gives the t-value $t_{\alpha,v}$ for a chosen confidence level and $v = n - 1$ degrees of freedom.

The confidence interval is then

$\bar{X_n} \pm t_{\alpha,v} \frac{S_n}{\sqrt{n}} $
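
The interval formula above could be sketched as a small function. The name `t_confidence_interval` and the example inputs are made up for illustration. The critical values are copied from a standard t-table for a two-sided 90% interval, and only a few rows are included, so this is a lookup sketch and not a general t-distribution implementation.

```python
import math

# Two-sided 90% critical values t_{alpha,v}, keyed by degrees of freedom v = n - 1.
# Copied from a standard t-table; only a few rows are included here.
T_TABLE_90 = {1: 6.314, 2: 2.920, 3: 2.353, 4: 2.132, 5: 2.015}


def t_confidence_interval(x_bar, s, n, t_table=T_TABLE_90):
    """Return (low, high) for x_bar +/- t * s / sqrt(n)."""
    if n < 2:
        raise ValueError("need at least two samples to estimate the spread")
    v = n - 1
    if v not in t_table:
        raise KeyError(f"no table entry for {v} degrees of freedom")
    half_width = t_table[v] * s / math.sqrt(n)
    return x_bar - half_width, x_bar + half_width


# Hypothetical: five packages averaging 85% lean with a sample std dev of 2%
low, high = t_confidence_interval(x_bar=0.85, s=0.02, n=5)
print(f"90% interval: [{low:.4f}, {high:.4f}]")
```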

Theoretical Application

Author’s note: I’m very close to testing this theory myself but I grind my own meat because it’s cheaper

I go to the supermarket. For me, it’s Stop and Shop. I buy my first ground beef package (85%, for my bolognese), but want to be confident in the quality of pre-ground beef I’m buying. So following the equation:

$\bar{X_n} \pm t_{\alpha,v} \frac{S_n}{\sqrt{n}} $

With $t_{\alpha,v} = 6.314$ from a t-distribution lookup table for a 90% interval with $n = 2$ (so $v = n - 1 = 1$). I’m assuming the sample standard deviation $S_n$ is 5% since ground beef is graded at 5% intervals - with my small number of samples that is theoretically the highest it could go.

My interval becomes:

$0.85 \pm 6.314 \frac{0.05}{\sqrt{2}} $

or

$[0.6268, 1.0732]$

So to be quite honest I have no idea what I’m buying. I could be buying 107% lean meat, or 63%. But what if I keep buying, assume the same variances, and try for a more accurate estimate?

Simulation Extension

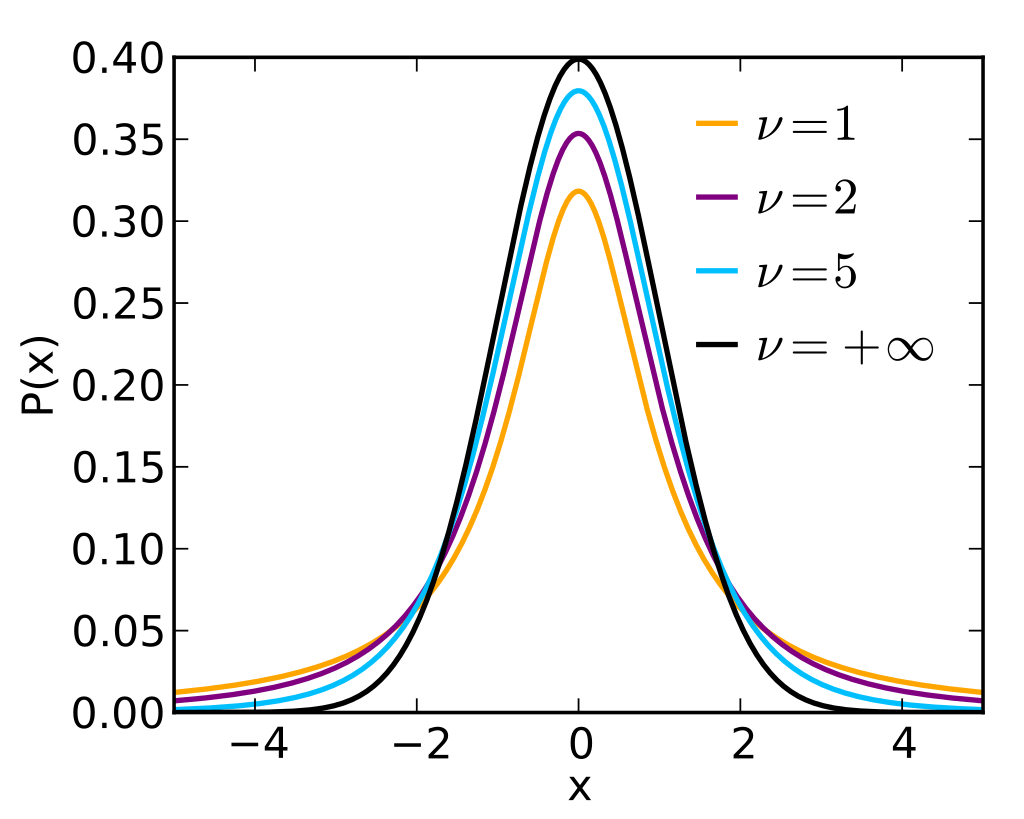

The student-t distribution changes shape as you increase the sample sizes:

Student T PDF from Wikipedia

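If you crunch the numbers, the limiting value of $t_{\alpha,v}$ for a 90% interval as the sample size grows is 1.645. Holding the standard error at its two-sample value of $0.05/\sqrt{2}$, this makes the maximum interval for 85% $[0.7918, 0.9082]$. That is conservative, since the standard error also shrinks as $n$ grows. This doesn’t mean the sample you just got contains anywhere from 79 to 91% lean beef; rather it says that, with 90% confidence, the true percentage of meat in 85% ground beef lies between 79% and 91%.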
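
To get a rough feel for how the interval tightens as more packages are bought, here is a sketch that keeps the assumed 5% standard deviation fixed and lets both the t-value and $\sqrt{n}$ change with $n$. The sample sizes are arbitrary picks, and the critical values are two-sided 90% entries copied from a standard t-table.

```python
import math

ASSUMED_S = 0.05  # assumed sample standard deviation (the 5% grading step)
X_BAR = 0.85      # labelled lean fraction, used as the sample mean

# Two-sided 90% critical values keyed by degrees of freedom v = n - 1
T_TABLE_90 = {1: 6.314, 2: 2.920, 4: 2.132, 9: 1.833, 29: 1.699}


def half_width(n, s=ASSUMED_S, t_table=T_TABLE_90):
    """Half-width of the interval: t_{alpha, n-1} * s / sqrt(n)."""
    return t_table[n - 1] * s / math.sqrt(n)


# Sample sizes chosen to match the table rows above
widths = {n: half_width(n) for n in (2, 3, 5, 10, 30)}

for n, w in widths.items():
    print(f"n = {n:2d}: [{X_BAR - w:.4f}, {X_BAR + w:.4f}]")
```

Under these assumptions, most of the narrowing comes from the first few extra packages, since that is where the t-value drops fastest.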

I imagine my estimate for sample variance is off but until I’m boiling ground beef in a test tube, I can’t determine the true percentage myself.

It’s important to know here that the true variance is unknowable. That’s the entire point of the student t distribution.

Conclusion (?)

This was a fun (or at the very least, interesting) dive into sampling for the student-t distribution. I am continuing to learn more about this example and will probably update after I ask my butcher, my stats teacher, and my ground meat that’s grown a mind of its own.